Following leaked benchmarks of the GeForce RTX 4070 12GB, Nvidia revealed that the upcoming graphics card will deliver rasterization performance equivalent to the RTX 3080.

As the GeForce RTX 4070 12GB approaches its launch date, AMD has published a new blog entry highlighting the importance of having more VRAM, pressing its memory advantage hard. AMD claims new titles require more VRAM than Nvidia currently offers.

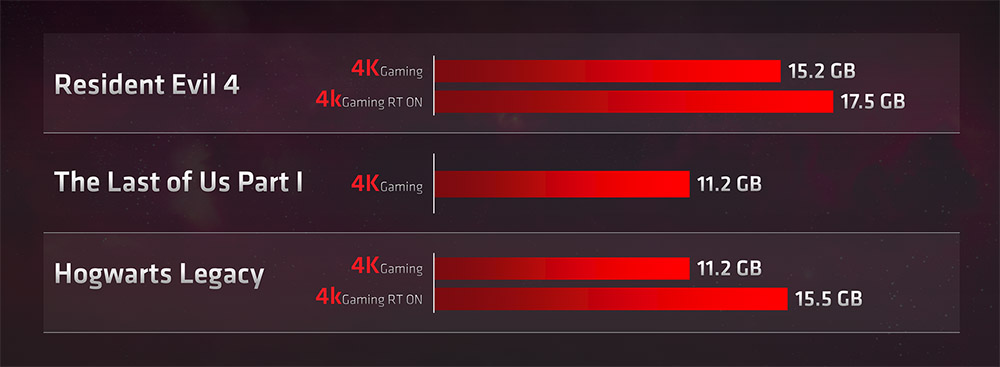

Numerous gamers have run into trouble lately when playing titles like the Resident Evil 4 Remake, Hogwarts Legacy, and The Last of Us Part I, even at 1080p. Nvidia graphics cards with 8GB of VRAM (such as the RTX 3060 Ti, RTX 3070, and RTX 3070 Ti) or 10GB, like the original RTX 3080 (a 12GB variant was released later), don’t have enough VRAM to run the latest games with the highest-quality textures and ray tracing.

Although The Last of Us Part I does not have ray tracing on PC or PlayStation 5, its environment textures at High or Ultra quality are far superior and more visually pleasing than at Medium, which is what users of 8GB graphics cards are forced to use given their smaller amount of VRAM.

No matter how powerful the GPU is or how good the upscaling technique, if the VRAM isn’t there, games tend to crash once the VRAM limit is exceeded by a wide margin, as happens with specific graphics cards in Resident Evil 4 or The Last of Us on PC.

The rumored RTX 4060 and RTX 4060 Ti reportedly feature 8GB, so these new budget-friendly graphics cards could be in serious trouble: with DLSS 2/3 or FSR2 they could comfortably play some of these titles at 4K, but their video memory would limit them significantly.

If we look at games that have not been released yet, Star Wars Jedi: Survivor lists a GPU with 8GB of VRAM as a minimum requirement. Most likely, as with the Resident Evil 4 Remake, The Last of Us Part I, or Hogwarts Legacy, a graphics card with 8GB of VRAM will not be enough to enable all the details, given that the game will have ray tracing effects too.

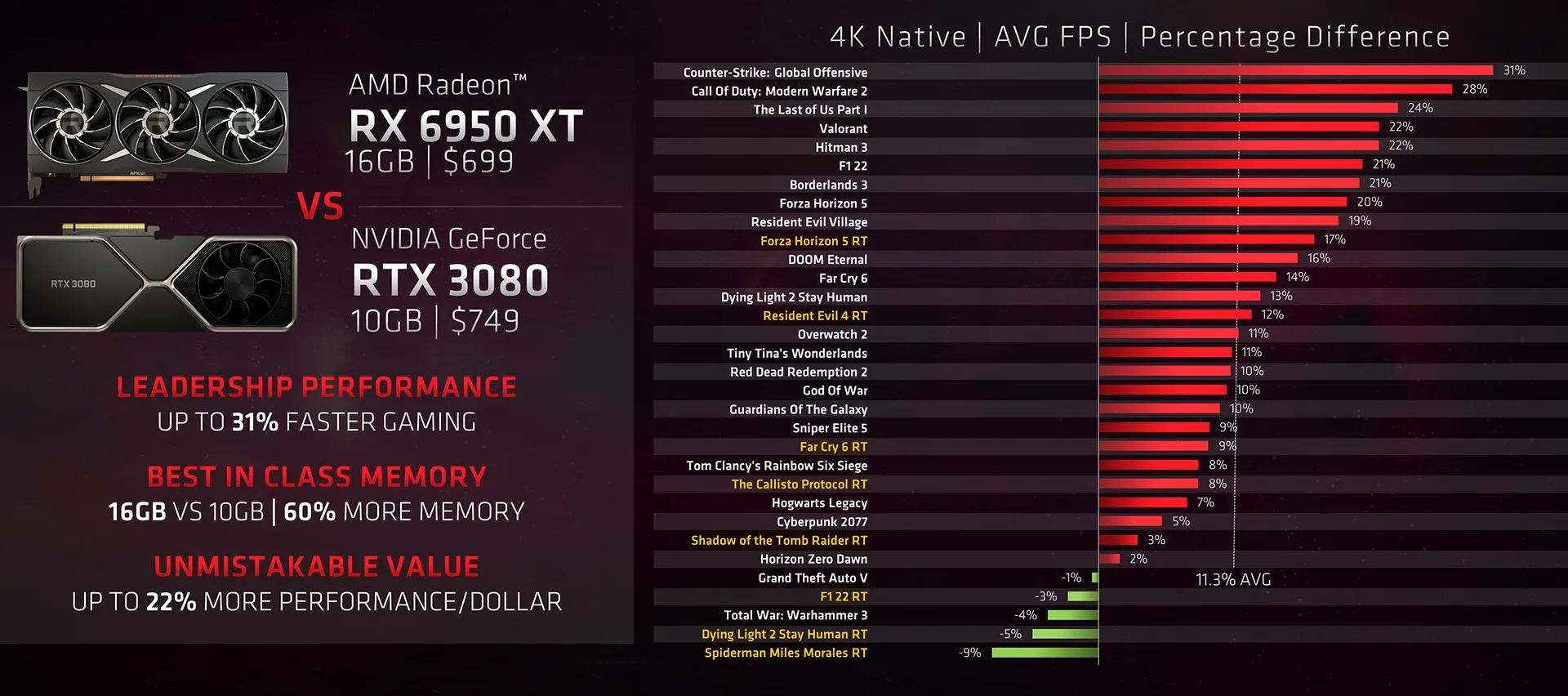

AMD goes on to show how gamers can find lower-priced alternatives with higher rasterization performance (i.e., without activating ray tracing or FSR2) in the games above. At 1440p and 4K resolutions, these games all require a lot of VRAM.

Beyond the fact that memory allocation differs between Nvidia and AMD architectures, Hogwarts Legacy and the Resident Evil 4 Remake can exceed 15GB of VRAM when played at 4K (with or without ray tracing). That is more than the 12GB of the RTX 4070 Ti (with performance equivalent to the RTX 3090 Ti) and of the RTX 4070, which launches tomorrow.
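To put that gap in numbers, here is a minimal Python sketch that compares the VRAM capacities mentioned in this article against a 15GB target. The helper name `cards_short_of` is made up for illustration, and the 15GB value is only the rough 4K figure quoted above; real usage varies with the game, the settings, and how each architecture allocates memory.

```python
# VRAM capacities in GB, as listed in this article.
CARD_VRAM_GB = {
    "RTX 3060 Ti": 8,
    "RTX 3070": 8,
    "RTX 3070 Ti": 8,
    "RTX 3080": 10,
    "RTX 4070": 12,
    "RTX 4070 Ti": 12,
    "RTX 4080": 16,
    "RX 7900 XT": 20,
    "RX 7900 XTX": 24,
}


def cards_short_of(required_gb, cards=CARD_VRAM_GB):
    """Return (name, shortfall_gb) for every card with less VRAM than
    required_gb, ordered from the largest shortfall to the smallest."""
    short = [
        (name, required_gb - vram)
        for name, vram in cards.items()
        if vram < required_gb
    ]
    return sorted(short, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # 15 is the illustrative 4K figure from the article, not a measured value.
    for name, gap in cards_short_of(15):
        print(f"{name}: {gap}GB short of 15GB")
```

With these numbers, every 8GB, 10GB, and 12GB card in the list falls short of the target, while the RTX 4080 and both RX 7900 cards clear it.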

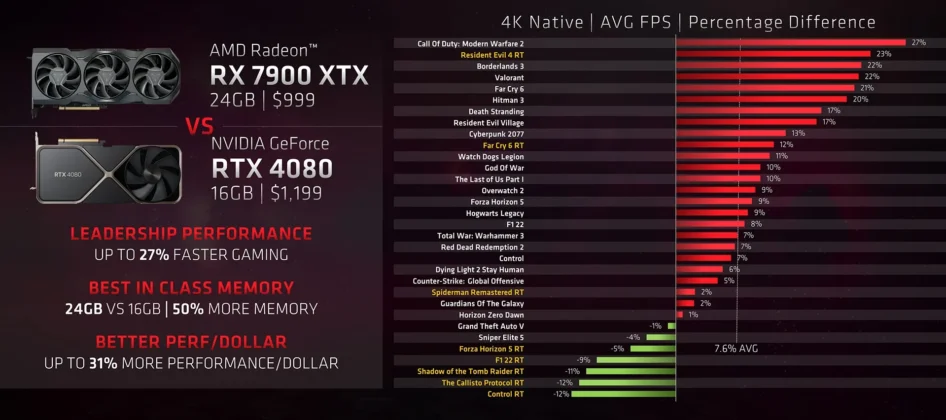

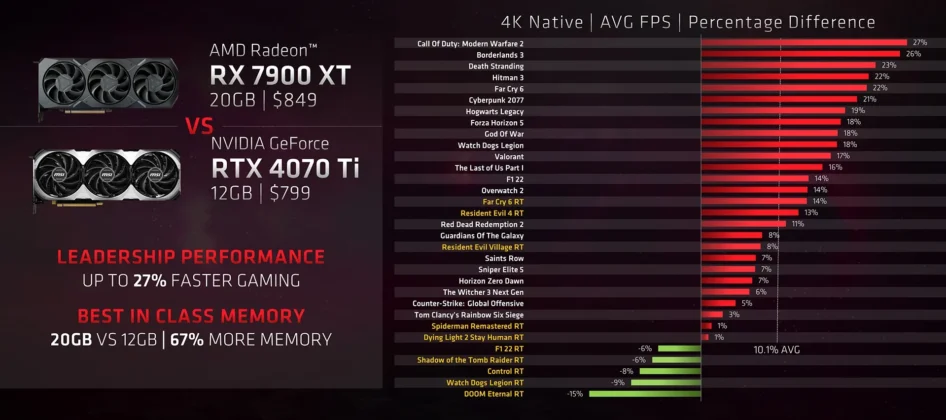

Above, you can see that AMD recommends its two latest GPUs (the RX 7900 XT 20GB and the RX 7900 XTX 24GB) over the RTX 4070 Ti 12GB and the RTX 4080 16GB, taking into account the difference in the amount of memory.

While it is doubtful that any game will use 20GB or more of VRAM, let’s not forget that these GPUs could also be used for 8K gaming with support for DLSS 3 or the promised FSR 3, and Nvidia cards could run short of video memory in that hypothetical case.

What are your thoughts on this new take from AMD on the importance of VRAM? Do you agree with the red camp? Should Nvidia consider increasing the VRAM, especially after this recent RTX 40-series graphics card price hike? Share your thoughts in the comment section below.


[Editor-in-Chief]

Sajjad Hussain is the Founder and Editor-in-Chief of Tech4Gamers.com. Apart from the tech and gaming scene, Sajjad is a seasoned banker who has delivered multi-million dollar projects as an IT Project Manager and works as a freelancer to provide professional services to corporate giants and emerging startups in the IT space.

Majored in Computer Science

13+ years of Experience as a PC Hardware Reviewer.

8+ years of Experience as an IT Project Manager in the Corporate Sector.

Certified in Google IT Support Specialization.

Admin of PPG, the largest local Community of gamers with 130k+ members.

Sajjad is a passionate and knowledgeable individual with many skills and experience in the tech industry and the gaming community. He is committed to providing honest, in-depth product reviews and analysis and building and maintaining a strong gaming community.