- AI rendering technologies like DLSS frame generation can raise displayed frame rates even in CPU-limited scenarios.

- Benchmark methods often rely on outdated CPU-bound assumptions.

- CPU upgrades still matter for competitive esports and high refresh rate gaming.

- Frame generation can increase FPS dramatically, but it does not eliminate every CPU limitation.

For years, PC gamers have feared one phrase more than any other: CPU bottleneck. It shows up in forum threads, benchmark debates, and upgrade advice. If your processor is “holding back” your GPU, the logic goes, then you’re leaving performance on the table.

In 2026, that assumption is starting to break down. Modern games increasingly support AI upscaling and frame generation technologies that can boost performance without relying as heavily on the traditional CPU-to-GPU rendering pipeline. The result is a strange new reality: gamers can sometimes see large frame rate improvements without upgrading their processor at all.

The Rise of AI Frame Generation

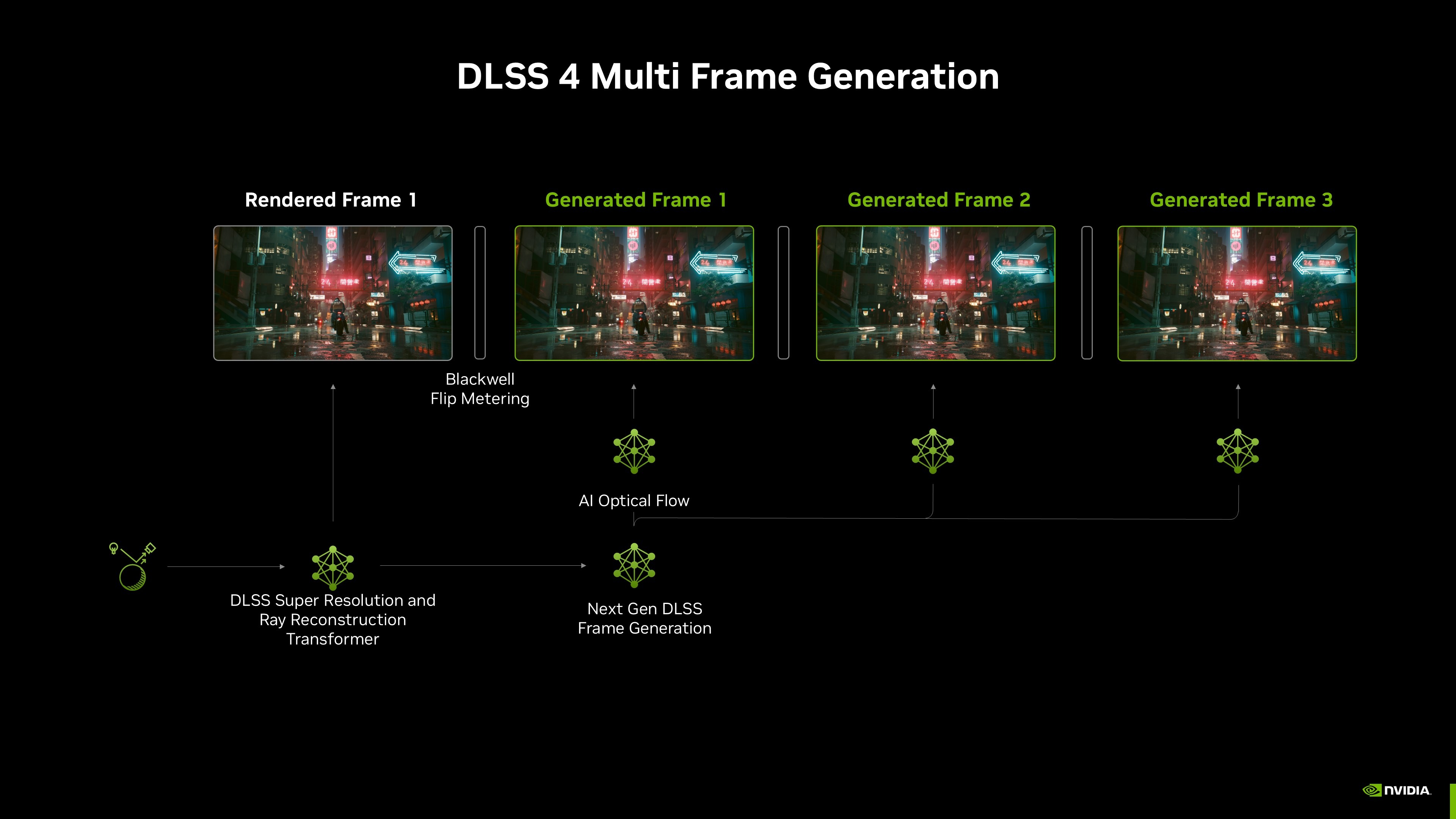

The shift became clearer around CES 2026, when NVIDIA continued expanding its DLSS ecosystem and emphasizing frame generation as a major part of modern PC graphics. AI-assisted rendering technologies are becoming a central part of how high frame rates are delivered on modern GPUs.

In simple terms, the GPU can now synthesize frames between traditionally rendered ones. AI models analyze motion data from previously rendered frames and generate additional images that appear between them on screen.

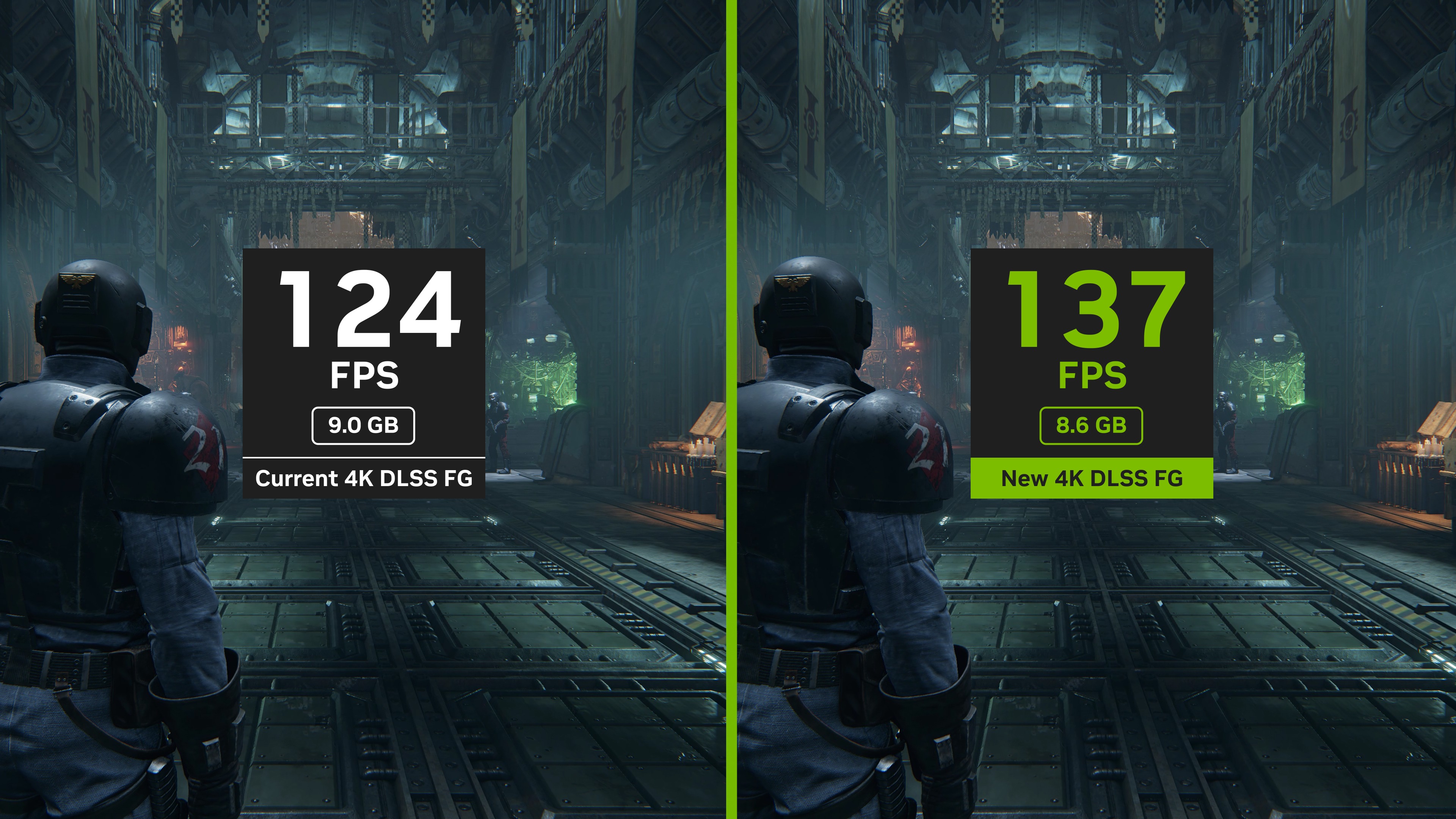

Hardware testing in early 2026 reinforced the impact, showing that enabling DLSS frame generation can dramatically increase displayed frame rates, even on systems paired with midrange or older CPUs. In some cases, GPUs scaled performance well beyond what traditional CPU limitations would normally allow.

At the same time, game developers have begun designing engines with AI-assisted rendering pipelines in mind. Many titles released in late 2025 and early 2026 ship with DLSS, FSR, or similar technologies available as optional graphics settings, allowing players to enable them depending on their hardware and performance targets.

This creates a new reality for PC gamers. The old advice about CPU bottlenecks is still technically true, but it does not always reflect how modern games actually behave.

Why CPU Bottlenecks Feel Different Now

The biggest impact is confusion. Gamers are seeing massive performance gains after enabling DLSS frame generation. A system that struggled to hit 70 FPS can suddenly report over 120 FPS. In the past, such scaling would almost certainly require a CPU upgrade.

But that does not mean the CPU got faster. It means the GPU is now generating additional frames on its own.

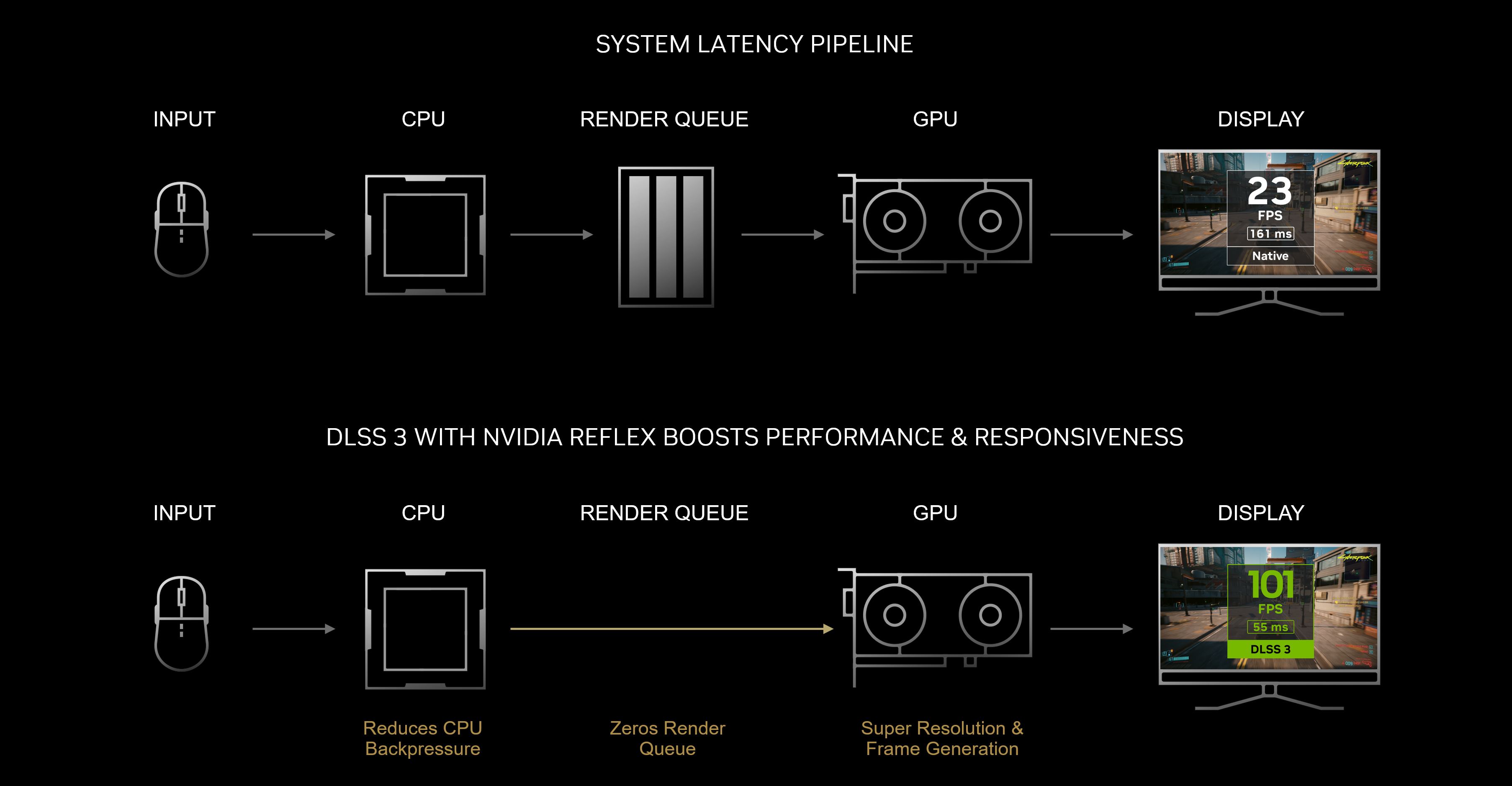

Traditional performance guidance assumed a simple pipeline. The CPU prepares each frame, the GPU renders it, and the frame appears on screen. In that model, a slow CPU can directly limit the number of frames produced per second.

AI frame generation changes that relationship. Once a base frame is rendered, the GPU can generate additional interpolated frames between real ones. This can raise the displayed frame rate even when the CPU limits the number of traditional frames produced.
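To make that relationship concrete, here is a minimal sketch of the simplified pipeline model described above. The function name, its parameters, and the example numbers are all illustrative assumptions, and the model ignores the GPU overhead that frame generation itself adds, so real results will usually land below the ideal figure.

```python
def displayed_fps(cpu_fps: float, gpu_fps: float, generated_per_real: int = 0) -> float:
    """Idealized estimate of on-screen frame rate.

    cpu_fps: frames per second the CPU can prepare (hypothetical figure).
    gpu_fps: frames per second the GPU can render traditionally (hypothetical figure).
    generated_per_real: AI-generated frames inserted after each real frame.
    """
    # Real frames are capped by the slower of the two stages.
    real_fps = min(cpu_fps, gpu_fps)
    # Each real frame is shown along with its generated frames.
    return real_fps * (1 + generated_per_real)


# A CPU-limited system: the CPU prepares 70 frames per second, the GPU could render 140.
print(displayed_fps(cpu_fps=70, gpu_fps=140))                        # frame generation off
print(displayed_fps(cpu_fps=70, gpu_fps=140, generated_per_real=1))  # one generated frame per real frame
```

In this toy model the CPU still caps real frames at 70 per second in both cases. Only the displayed number changes.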

For gamers, the takeaway is important. A system that once looked CPU-limited may now deliver excellent performance simply by enabling AI rendering features.

Rethinking CPU Bottlenecks in 2026

So what does a CPU bottleneck in gaming actually look like today? The answer is not as simple as it used to be.

If a game is heavily simulation-driven, the CPU still matters. Strategy games, large open-world simulations, and multiplayer titles with complex AI systems often rely heavily on CPU processing. In those cases, upgrading the processor can still unlock meaningful performance gains.

However, in GPU-heavy single-player titles with DLSS frame generation enabled, the CPU may become less critical to achieving high frame rates.

This is one reason many gamers report dramatic FPS improvements after enabling DLSS frame generation or similar technologies. The GPU effectively fills in the gaps between the frames the CPU prepares. That does not mean the CPU stops mattering. Instead, its influence on raw FPS numbers becomes less direct.

When CPU Upgrades Still Matter

Despite the changes, CPUs remain crucial in several scenarios. Competitive esports games are the clearest example. Titles like Counter-Strike, Valorant, and other high-refresh shooters rely heavily on low latency and fast frame delivery. Frame generation does not always help here because it can introduce additional input delay. In those situations, raw CPU performance still determines how quickly real frames are produced.

Another factor is latency sensitivity. Frame generation improves visual smoothness, but it does not accelerate the underlying simulation running on the CPU. If the CPU struggles to process game logic quickly enough, frame generation may mask the problem without fully solving it.
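A rough way to picture the difference between smoothness and responsiveness is sketched below. It assumes, as a simplification, that the game only reacts to new input on real frames. The function name and the 60 FPS figure are hypothetical, and the sketch leaves out any extra delay the frame generation step itself may add.

```python
def smoothness_vs_responsiveness(real_fps: float, generated_per_real: int = 1) -> dict:
    """Compare how often the screen updates with how often input can take effect."""
    shown_fps = real_fps * (1 + generated_per_real)
    return {
        # Time between any two frames on screen (visual smoothness).
        "displayed_interval_ms": 1000.0 / shown_fps,
        # Time between two real frames, which are the only ones
        # assumed to reflect fresh input and game logic.
        "input_update_interval_ms": 1000.0 / real_fps,
    }


result = smoothness_vs_responsiveness(real_fps=60, generated_per_real=1)
print(f"screen updates every {result['displayed_interval_ms']:.2f} ms")
print(f"input can take effect every {result['input_update_interval_ms']:.2f} ms")
```

Under these assumptions the screen refreshes twice as often, but the game responds no faster than it did at 60 real frames per second.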

High refresh monitors also expose CPU limitations more clearly. At 240 Hz or above, even powerful GPUs often rely on fast CPUs to maintain consistent real frame delivery.
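The arithmetic behind this is simple: the higher the refresh rate, the less time each real frame gets. The sketch below works out that budget and checks a CPU frame time against it. The helper names and the 5 ms CPU frame time are made-up values for illustration, not measurements.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available per frame, in milliseconds, to match a given refresh rate."""
    return 1000.0 / refresh_hz


def cpu_keeps_up(refresh_hz: float, cpu_frame_time_ms: float) -> bool:
    """True if the CPU prepares a frame within the refresh budget."""
    return cpu_frame_time_ms <= frame_budget_ms(refresh_hz)


for hz in (60, 144, 240):
    print(f"{hz} Hz -> {frame_budget_ms(hz):.2f} ms per frame")

# A hypothetical CPU that needs 5 ms of work per frame:
print(cpu_keeps_up(144, 5.0))
print(cpu_keeps_up(240, 5.0))
```

A CPU that comfortably feeds a 144 Hz display can still fall short of the roughly 4 ms window that 240 Hz demands.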

The Benchmarking Problem

One reason confusion persists is benchmarking. Many performance charts still test games in CPU-bound conditions, often at low resolutions like 1080p with powerful GPUs. This approach isolates CPU performance differences, which is useful for hardware comparisons.

But it does not always reflect how people actually play games today. In real-world scenarios, gamers often enable DLSS or other upscaling technologies, play at higher resolutions, and rely on GPU-driven features. In those cases, CPU bottlenecks appear less frequently than traditional benchmarks suggest.
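One common rule of thumb for telling the two situations apart is to compare frame rates at a low and a high resolution with frame generation turned off. If the number barely moves, the CPU is probably the limit. The sketch below encodes that heuristic. The function name, the 10 percent tolerance, and the sample FPS values are assumptions chosen for illustration, not an established standard or real benchmark data.

```python
def likely_cpu_bound(fps_low_res: float, fps_high_res: float, tolerance: float = 0.10) -> bool:
    """Heuristic: if raising the resolution barely lowers FPS, the GPU has
    headroom and the CPU is likely the limiting factor."""
    drop = (fps_low_res - fps_high_res) / fps_low_res
    return drop <= tolerance


# Hypothetical results for two games, measured at 1080p and then at 4K.
print(likely_cpu_bound(fps_low_res=142, fps_high_res=138))  # FPS barely changes
print(likely_cpu_bound(fps_low_res=200, fps_high_res=95))   # FPS falls by more than half
```

The first case behaves like a CPU-bound game, while the second looks GPU-bound at the resolution people would actually play at.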

This gap between lab testing and real gameplay is fueling ongoing debate across hardware communities.

My Take on Where Things Are Heading

The shift toward AI-driven rendering does not look like it will slow down anytime soon. GPU vendors are investing heavily in frame generation and neural rendering techniques, and game engines are beginning to integrate these features more deeply.

As these technologies mature, the relationship between CPUs and GPUs will continue to evolve. In my opinion, the key takeaway is fairly simple. CPU bottlenecks have not disappeared, but they are no longer the universal performance wall they once seemed to be. Sometimes they still matter. But in many modern games, I think they matter far less than they used to.

For PC gamers, understanding when each case applies may become one of the most important skills when navigating the next generation of gaming hardware.

[Comparisons Expert]

Shehryar Khan, a seasoned PC hardware expert, brings over three years of extensive experience and a deep passion for the world of technology. With a love for building PCs and a genuine enthusiasm for exploring the latest advancements in components, his expertise shines through his work and dedication towards this field. Currently, Shehryar is rocking a custom loop setup for his build.

Get In Touch: shehryar@tech4gamers.com